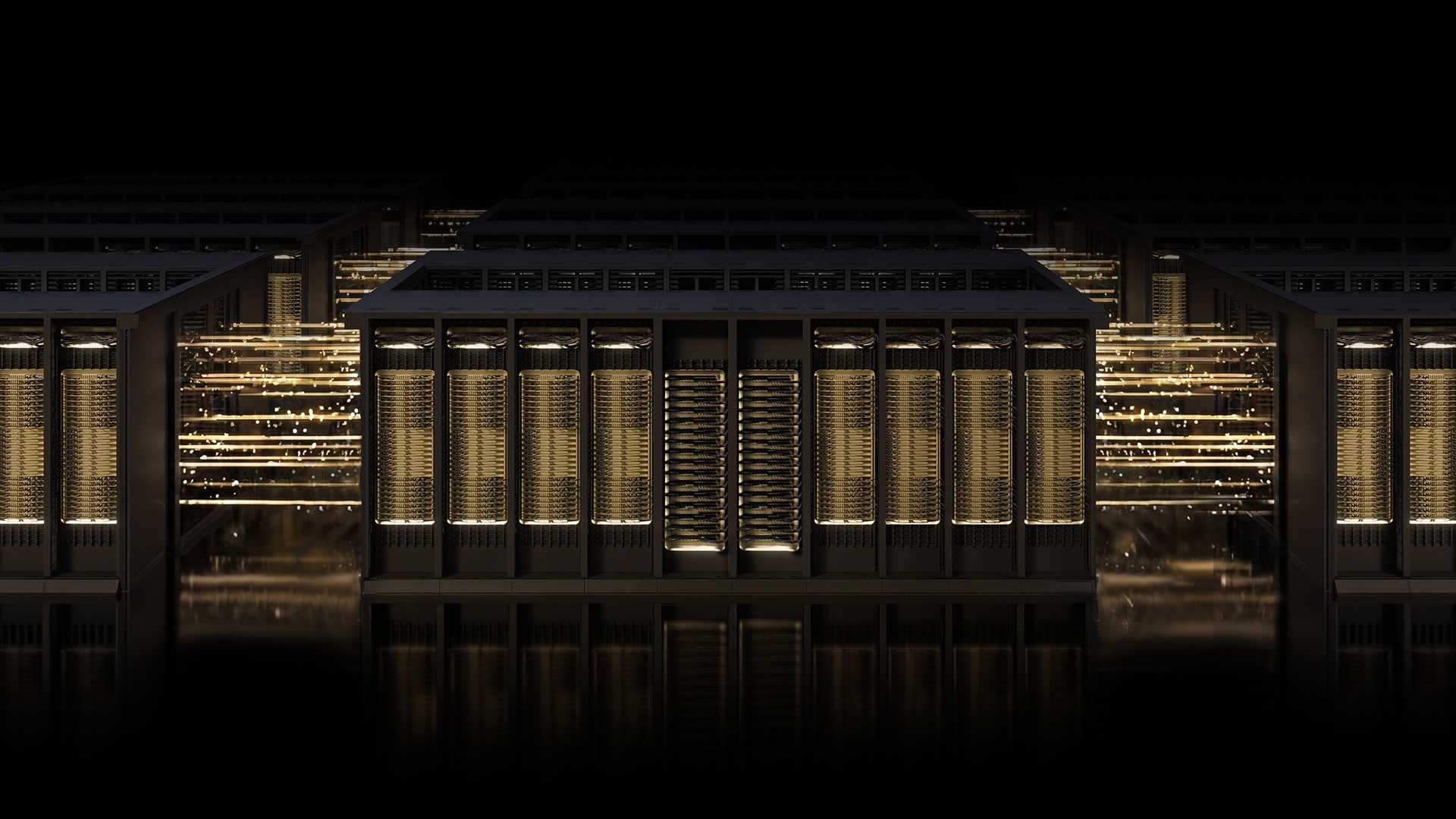

NVIDIA Spectrum-X Ethernet and MRC: Revolutionizing AI Network Infrastructure

In the rapidly evolving landscape of AI, network infrastructure is the backbone that enables massive-scale training. NVIDIA's Spectrum-X Ethernet fabric, enhanced with the new Multipath Reliable Connection (MRC) protocol, is setting a new standard for performance, resilience, and scalability. This Q&A explores the key aspects of this technology and its impact on leading AI factories.

What is NVIDIA Spectrum-X Ethernet and who uses it?

NVIDIA Spectrum-X is an open, AI-native Ethernet networking platform designed specifically for high-performance AI workloads. It combines purpose-built hardware, deep telemetry, and intelligent fabric control to deliver high throughput, low latency, and robust reliability. Unlike standard Ethernet, Spectrum-X is optimized for the demands of gigascale AI training, where even minor network hiccups can waste millions of dollars' worth of GPU compute time. Industry leaders such as OpenAI, Microsoft, and Oracle have deployed Spectrum-X in their largest AI factories. For example, Microsoft's Fairwater and Oracle Cloud Infrastructure's Abilene data centers, two of the world's most advanced AI facilities, rely on this technology to host and train frontier large language models. These companies choose Spectrum-X because it minimizes network bottlenecks, maximizes GPU utilization, and scales efficiently to thousands of nodes, making it the foundation for their most demanding AI projects.

What is Multipath Reliable Connection (MRC) and how does it work?

Multipath Reliable Connection (MRC) is an RDMA transport protocol co-developed by NVIDIA, Microsoft, and OpenAI. It allows a single RDMA connection to spread traffic across multiple network paths simultaneously. Think of it as replacing a single-lane road with a street grid where traffic flows adaptively: if one route gets congested or fails, data is quickly rerouted via alternative paths. MRC achieves this by load-balancing each connection's traffic across all available links and steering it away from overloaded routes in real time. When packet loss occurs, as is inevitable in large networks, intelligent retransmission recovers the lost data quickly and precisely without causing major delays. This approach keeps GPU clusters continuously fed with data, preventing idle time and maintaining high training efficiency. MRC was first proven in production on NVIDIA Spectrum-X hardware and has now been released as an open specification through the Open Compute Project, encouraging broader adoption and innovation.
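To make the idea concrete, here is a minimal toy sketch of the behavior described above: one connection's packets are spread over several paths, a path that drops a packet is avoided afterward, and only the lost packet is resent. This is not the MRC algorithm or its wire format, which are defined in the OCP specification. The `Path` and `MultipathSender` classes, the least-loaded selection rule, and the failure scenario are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Path:
    """One hypothetical route through the fabric (illustrative only)."""
    name: str
    healthy: bool = True
    queued: int = 0  # packets this sender has assigned to the path so far


@dataclass
class MultipathSender:
    """Toy sender that sprays a single connection's packets over several paths."""
    paths: list
    delivered: set = field(default_factory=set)
    retransmits: int = 0

    def pick_path(self):
        # Assumed policy: least-loaded path among those still believed healthy.
        candidates = [p for p in self.paths if p.healthy]
        if not candidates:
            raise RuntimeError("no healthy path available")
        return min(candidates, key=lambda p: p.queued)

    def send(self, seq, failing=()):
        """Send packet `seq`; any path whose name is in `failing` drops it."""
        while True:
            path = self.pick_path()
            path.queued += 1
            if path.name in failing:
                # Loss detected: stop using this path and resend only this packet.
                path.healthy = False
                self.retransmits += 1
                continue
            self.delivered.add(seq)
            return path.name


def run_demo():
    sender = MultipathSender([Path("A"), Path("B"), Path("C"), Path("D")])
    used = []
    for seq in range(12):
        # In this made-up scenario, path "B" starts dropping packets at seq 4.
        failing = ("B",) if seq >= 4 else ()
        used.append(sender.send(seq, failing))
    return sender, used
```

Calling `run_demo()` returns the sender state and the path each packet finally travelled on. In this scenario every packet is delivered, exactly one is retransmitted, and path "B" carries no traffic after its first drop. A real implementation would also handle out-of-order arrival, congestion signals, and path recovery, all of which this sketch omits.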

How does MRC improve performance for AI training?

MRC significantly enhances AI training performance by ensuring all available network bandwidth is utilized effectively. During a training run, GPUs need a steady stream of data, and any slowdown starves them and wastes compute cycles. MRC distributes traffic across multiple paths, preventing any single link from becoming a bottleneck. This load balancing maintains high GPU utilization throughout the entire job, even under heavy congestion. Additionally, MRC's real-time path selection adapts to network conditions, dynamically shifting traffic away from overloaded routes. If a short-lived interruption causes data loss, MRC's intelligent retransmission recovers fast enough to avoid stalling GPU operations. The net result is fewer interruptions, higher throughput, and more efficient training of massive models. According to OpenAI's Sachin Katti, deploying MRC in the Blackwell generation allowed them to avoid typical network-related slowdowns, maintaining the efficiency of frontier training runs at scale. This performance gain translates directly to faster model development and reduced costs for AI companies.
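A rough back-of-the-envelope model shows why avoiding a single hot link matters. The sketch below compares flows pinned to one link each against the same traffic spread evenly over all links, in an idealized fabric with no latency, loss, or reordering cost. The function names, the 400 Gb/s link speed, the flow sizes, and the flow placement are invented for illustration and are not measurements of Spectrum-X or MRC.

```python
def completion_time_pinned(flow_sizes_gbit, flow_to_link, link_gbps, num_links):
    """Seconds until the busiest link drains when each flow is pinned to one link."""
    load = [0.0] * num_links
    for size, link in zip(flow_sizes_gbit, flow_to_link):
        load[link] += size
    # A synchronous step finishes only when the slowest transfer finishes.
    return max(load) / link_gbps


def completion_time_sprayed(flow_sizes_gbit, link_gbps, num_links):
    """Seconds to drain the same traffic spread evenly over every link."""
    return sum(flow_sizes_gbit) / (num_links * link_gbps)


# Hypothetical scenario: four 400 Gbit transfers over four 400 Gb/s links.
flows = [400, 400, 400, 400]
# Assumed unlucky placement: two flows share link 0 while link 3 sits idle.
placement = [0, 0, 1, 2]

pinned = completion_time_pinned(flows, placement, link_gbps=400, num_links=4)
sprayed = completion_time_sprayed(flows, link_gbps=400, num_links=4)
print(pinned, sprayed)  # 2.0 1.0
```

In this made-up case the collision doubles the transfer time even though a quarter of the fabric is idle, while spreading the traffic evenly finishes in half the time. Real fabrics are messier, but the arithmetic captures why the busiest link, not the average link, sets the pace of a synchronized training step.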

What are the benefits of MRC being an open specification under OCP?

By releasing MRC as an open specification through the Open Compute Project (OCP), NVIDIA and its collaborators promote industry-wide innovation and interoperability. An open specification allows multiple vendors to implement the protocol, fostering a competitive ecosystem that can drive down costs and improve performance. For AI operators, this means they are not locked into a single hardware supplier; they can mix and match compatible networking gear while still benefiting from MRC's advanced features. Openness also accelerates community contributions: other researchers, engineers, and companies can propose improvements or build complementary tools. The transparency simplifies integration into diverse environments, from cloud data centers to on-premises AI factories. Overall, MRC's open nature aligns with OCP's mission to make infrastructure more efficient, scalable, and accessible, so that breakthroughs in AI networking are shared rather than kept proprietary. This move encourages broader adoption and helps standardize the way high-performance AI networks are built globally.

How do OpenAI, Microsoft, and Oracle benefit from MRC in their AI factories?

OpenAI, Microsoft, and Oracle each operate massive AI factories purpose-built for training frontier models. Microsoft's Fairwater and Oracle Cloud Infrastructure's Abilene are among the largest such facilities, and they rely on MRC to achieve the performance, scale, and efficiency required. For OpenAI, MRC enabled the successful deployment of the Blackwell generation, avoiding network-related slowdowns that could otherwise interrupt long training runs. Microsoft leverages its long-standing collaboration with NVIDIA to integrate MRC deeply into its infrastructure, ensuring that Azure's AI services remain competitive and reliable. Oracle uses MRC in OCI's Abilene data center to offer customers a high-performance, low-latency networking environment for large-scale LLM training. In all cases, MRC helps keep GPU utilization high, reduces job completion times, and simplifies network management through granular traffic visibility. The result is faster innovation cycles for AI models and more predictable performance for clients, solidifying these companies' leadership in the AI industry.

What makes Spectrum-X Ethernet the foundation for gigascale AI?

NVIDIA Spectrum-X Ethernet stands out for AI because it is purpose-built for the traffic patterns and demands of distributed training. The platform integrates three key elements: purpose-built hardware with high-speed ports and advanced packet processing, deep telemetry that monitors flows in real time, and intelligent fabric control that makes automated decisions to optimize performance. Together, these components allow Spectrum-X to handle the extreme data rates and congestion sensitivity of AI workloads. The addition of MRC further enhances its capability by providing multipath load balancing and rapid loss recovery. Administrators gain fine-grained visibility over traffic paths, simplifying troubleshooting and capacity planning. Because it is built on standards-based Ethernet, Spectrum-X interoperates with existing infrastructure while delivering AI-optimized performance. This combination makes it the go-to choice for building gigascale AI factories, as demonstrated by its adoption by industry leaders like OpenAI, Microsoft, and Oracle.
