How Spotify Engineers Build Your 2025 Wrapped Highlights: A Step-by-Step Tech Walkthrough

Introduction

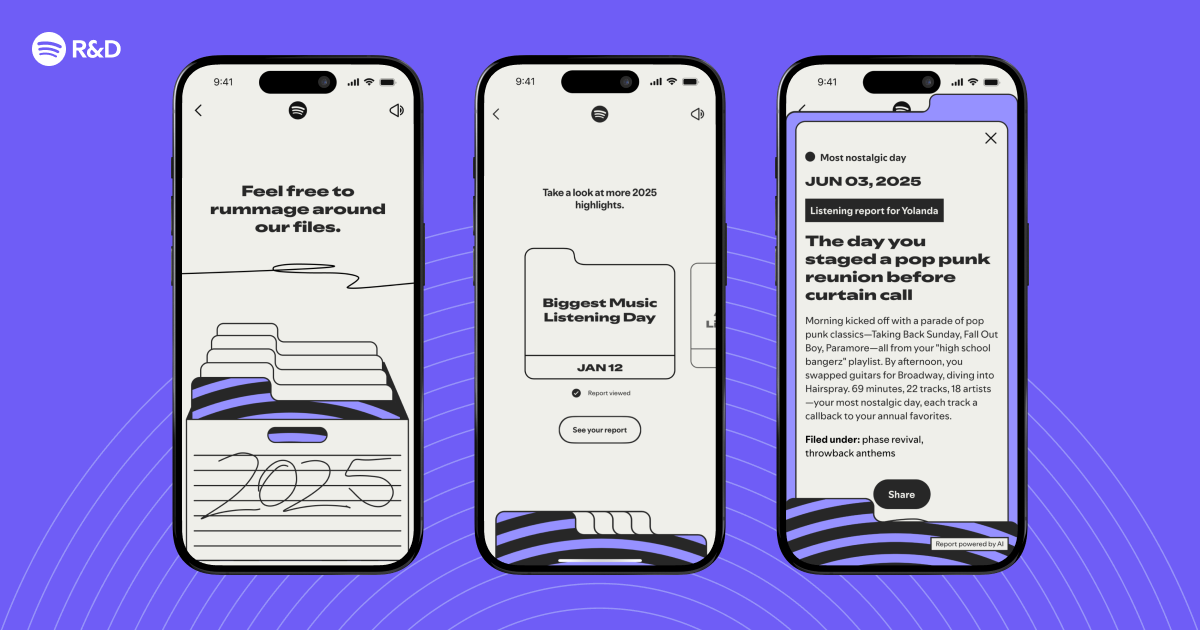

Every December, millions of Spotify users eagerly await their personalized Wrapped experience—a visual summary of their listening habits over the year. But have you ever wondered what goes on behind the scenes to identify those “interesting listening moments” and weave them into a compelling story? This guide walks you through the technical process that Spotify’s engineering team uses to create your 2025 Wrapped highlights. From data collection to storytelling algorithms, each step reveals the magic that turns raw streams into a cherished year-end recap.

What You Need

To follow this guide, you’ll need a basic understanding of data pipelines, machine learning, and cloud infrastructure. The actual implementation at Spotify involves:

- Massive data stores (e.g., Bigtable, Cassandra) for user listening logs

- Stream processing (Apache Beam pipelines running on Google Cloud Dataflow) for real-time aggregation

- Machine learning models (TensorFlow, PyTorch) for pattern recognition

- Storytelling frameworks that combine narrative templates with personalized data

- Frontend rendering tools (WebGL, Canvas) for interactive visuals

- Deployment infrastructure (Kubernetes, CI/CD) to ship updates globally

While you won’t need access to Spotify’s proprietary systems, this guide outlines the conceptual steps any engineering team can adapt to create a similar feature.

Step 1: Collect and Aggregate Raw Listening Data

The foundation of Wrapped is a complete picture of each user’s year. Spotify ingests billions of events daily: stream starts, skips, saves, shares, and more. For the 2025 version, engineers enhanced data collection to capture richer context, such as listening duration per track, time of day, and device type. Using Apache Beam on Google Cloud Dataflow, the team processes these events in near real time. The result is a cleaned, timestamped log for every user, stored in a scalable wide-column store such as Bigtable. The aggregated dataset includes fields like user_id, track_id, album, artist, listen_count, and timestamp, which is everything needed to spot trends.
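
The aggregation step can be illustrated with a minimal sketch in plain Python. It is a stand-in for a real Beam pipeline, not Spotify's code: the `ListenEvent` schema, the `ms_played` field, and the 30-second threshold for counting a play are all assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ListenEvent:
    """One raw playback event (hypothetical schema, loosely based on the fields above)."""
    user_id: str
    track_id: str
    artist: str
    timestamp: datetime  # when the stream started (UTC)
    ms_played: int       # listening duration for this event, in milliseconds


# Assumed cutoff for counting an event as a play rather than a skip.
MIN_MS_PLAYED = 30_000


def daily_listen_counts(events):
    """Aggregate events into {(user_id, track_id, date): listen_count}."""
    counts = Counter()
    for e in events:
        if e.ms_played < MIN_MS_PLAYED:
            continue  # too short: treat as a skip and do not count it
        counts[(e.user_id, e.track_id, e.timestamp.date())] += 1
    return counts


events = [
    ListenEvent("u1", "t1", "Artist A", datetime(2025, 9, 3, 8, 15, tzinfo=timezone.utc), 181_000),
    ListenEvent("u1", "t1", "Artist A", datetime(2025, 9, 3, 18, 40, tzinfo=timezone.utc), 200_000),
    ListenEvent("u1", "t2", "Artist B", datetime(2025, 9, 3, 19, 0, tzinfo=timezone.utc), 4_000),
]
counts = daily_listen_counts(events)
```

In a streaming pipeline the same grouping would typically be expressed as a keyed, windowed count; the dictionary here only shows the shape of the output.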

Step 2: Identify Statistical Anomalies and Patterns

Once the data is aggregated, the next step is to detect what makes a listening moment “interesting.” Engineers apply statistical outlier detection to find spikes in plays for a specific artist, sudden genre shifts, or marathon sessions. For example, if you listened to a particular song 50 times in one day but only twice during the rest of the year, that day becomes a candidate highlight. They also use time-series analysis to spot seasonal patterns, such as increased podcast listening in months with more commuting. In 2025, Spotify introduced graph-based clustering to group related listening events (e.g., all tracks from a playlist you made after attending a concert). These algorithms run on Apache Spark clusters, processing terabytes of data in parallel.
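
One simple way to flag the "50 plays in one day" case is a z-score over the daily play counts. The sketch below assumes this technique for illustration only; the function name `spike_days` and the threshold of 3 standard deviations are hypothetical choices, and a production system would likely use more robust statistics.

```python
from datetime import date, timedelta
from statistics import fmean, pstdev


def spike_days(daily_plays, start, end, z_threshold=3.0):
    """Return [(day, plays, z_score)] for days whose play count is an outlier.

    daily_plays maps date -> play count for one user and one track.
    Days missing from the mapping count as zero plays.
    """
    n_days = (end - start).days + 1
    days = [start + timedelta(days=i) for i in range(n_days)]
    series = [daily_plays.get(d, 0) for d in days]
    mean, std = fmean(series), pstdev(series)
    if std == 0:
        return []  # perfectly flat listening: nothing stands out
    results = []
    for d, plays in zip(days, series):
        z = (plays - mean) / std
        if z >= z_threshold:
            results.append((d, plays, z))
    return results


# The example from the text: 50 plays on one day, two plays in the rest of the year.
plays = {date(2025, 9, 14): 50, date(2025, 2, 1): 1, date(2025, 11, 20): 1}
highlights = spike_days(plays, date(2025, 1, 1), date(2025, 12, 31))
```

Only the 50-play day clears the threshold here; the two single plays sit well within normal variation.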

Step 3: Generate Personalized Story Candidates

With anomalies and patterns flagged, the system moves to storytelling. A narrative engine takes the flagged highlights and fits them into templates to produce mini-stories. For instance, a sudden listening burst might become “You rediscovered [Artist] in September. Here’s why.” The engine uses natural language generation (NLG) models trained on millions of human-written examples. Engineers fine-tune transformer-based models (GPT-style architectures) so that the wording feels personal and engaging. Each user gets a set of candidate stories (top artist, top genre, most repeated song, new discovery, and so on), ranked by relevance using a reinforcement learning score that estimates engagement likelihood. The final selection is limited to 5–7 stories to keep Wrapped concise.
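
The template-and-rank idea can be sketched without any NLG model at all. In the sketch below, the `TEMPLATES` table, the `Highlight` type, and the precomputed `score` field are hypothetical; in the described system the score would come from the ranking model rather than being supplied by hand.

```python
from dataclasses import dataclass

# Hypothetical templates keyed by highlight type.
TEMPLATES = {
    "rediscovery": "You rediscovered {artist} in {month}.",
    "top_artist": "{artist} was your top artist, with {plays} plays.",
    "most_repeated": "You played {track} {plays} times.",
}


@dataclass
class Highlight:
    kind: str      # which template to use
    fields: dict   # values substituted into the template
    score: float   # assumed relevance score; higher means more engaging


def build_stories(highlights, max_stories=7):
    """Render each highlight through its template and keep the top-scoring ones."""
    renderable = [h for h in highlights if h.kind in TEMPLATES]
    ranked = sorted(renderable, key=lambda h: h.score, reverse=True)
    return [TEMPLATES[h.kind].format(**h.fields) for h in ranked[:max_stories]]


candidates = [
    Highlight("top_artist", {"artist": "Artist A", "plays": 412}, score=0.6),
    Highlight("rediscovery", {"artist": "Artist B", "month": "September"}, score=0.9),
]
stories = build_stories(candidates, max_stories=5)
```

Capping the output with `max_stories` reflects the 5–7 story limit described above.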

Step 4: Design Dynamic Visuals and Interactivity

Stories need visuals. Spotify’s design team creates dynamic, data-driven graphics that change based on the user’s listening profile. For the 2025 Wrapped, they introduced WebGL-based particle animations that represent song streams as stars in a galaxy: the more a song was played, the brighter its star. Engineers use Canvas 2D for simpler elements like genre tiles and pie charts. The frontend is built with React and optimized for mobile devices. A key challenge is rendering these visuals without lag, so the team preloads user-specific data in a compressed JSON bundle that the browser (or app) parses on launch. Accessibility is also built in: the NLG engine generates alt text for every graphic.
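
As a rough illustration of the server side of this step, the sketch below builds a gzip-compressed JSON bundle and maps play counts to star brightness. The log-scale mapping, the bundle layout, and the function names are assumptions for illustration; the text does not specify how brightness is computed or how the bundle is structured.

```python
import gzip
import json
import math


def star_brightness(stream_count, max_count):
    """Map a stream count to a brightness in [0, 1] on a log scale (assumed mapping)."""
    if max_count <= 0 or stream_count <= 0:
        return 0.0
    return math.log1p(stream_count) / math.log1p(max_count)


def build_bundle(user_id, track_streams):
    """Serialize per-track brightness values into a gzip-compressed JSON payload."""
    max_count = max(track_streams.values(), default=0)
    payload = {
        "user_id": user_id,
        "stars": [
            {"track_id": t, "brightness": round(star_brightness(c, max_count), 3)}
            for t, c in sorted(track_streams.items())
        ],
    }
    # Compact separators keep the uncompressed JSON small before gzip runs.
    raw = json.dumps(payload, separators=(",", ":")).encode("utf-8")
    return gzip.compress(raw)


def read_bundle(blob):
    """Inverse of build_bundle: decompress and parse, as a client would on launch."""
    return json.loads(gzip.decompress(blob))


blob = build_bundle("u1", {"t1": 100, "t2": 10, "t3": 0})
bundle = read_bundle(blob)
```

A log scale is used here so that a handful of heavily played tracks do not leave every other star nearly invisible.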

Step 5: Run A/B Tests and Personalization Tuning

Before Wrapped goes live, the engineering team runs extensive A/B tests on a subset of users. They compare different story combinations, visual styles, and even the order of slides to see which version earns the most shares and the longest dwell time. Metrics like click-through rate on “Share” buttons and completion rate of the entire Wrapped flow guide final decisions. In 2025, the team used contextual bandits to dynamically adjust which stories appear for each user based on real-time feedback during the first week of rollout. This personalization tuning is crucial because it keeps the experience feeling magical rather than random.
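
To make the contextual bandit idea concrete, here is a minimal tabular epsilon-greedy sketch. It is one of the simplest bandit strategies and is not claimed to be the one Spotify uses; the class name, the string contexts, and the binary share reward are assumptions for illustration.

```python
import random
from collections import defaultdict


class EpsilonGreedyBandit:
    """Tabular epsilon-greedy contextual bandit: one reward estimate per (context, arm)."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.pulls = defaultdict(int)    # (context, arm) -> number of observations
        self.value = defaultdict(float)  # (context, arm) -> mean observed reward

    def choose(self, context):
        """Pick an arm: explore with probability epsilon, otherwise exploit."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        return max(self.arms, key=lambda arm: self.value[(context, arm)])

    def update(self, context, arm, reward):
        """Fold one observed reward into the running mean for (context, arm)."""
        key = (context, arm)
        self.pulls[key] += 1
        self.value[key] += (reward - self.value[key]) / self.pulls[key]


# Hypothetical usage: arms are story types, context is a coarse user segment,
# and reward is 1.0 if the user shared the story, else 0.0.
bandit = EpsilonGreedyBandit(["top_artist", "rediscovery"], epsilon=0.1, seed=42)
story = bandit.choose("mobile")
bandit.update("mobile", story, reward=1.0)
```

Real systems usually replace the lookup table with a model over user features, but the choose-then-update loop has the same shape.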

Step 6: Deploy and Monitor at Global Scale

Finally, the finished Wrapped package is deployed to all users. Spotify uses Kubernetes clusters across multiple cloud regions to handle the traffic spike in December. The team implements canary releases, gradually increasing the rollout percentage to catch issues early. Behind the scenes, Prometheus and Grafana dashboards monitor latency, error rates, and database load. A dedicated incident response team is on standby to fix any glitches, such as missing data for a user or a slow-loading animation. Because Wrapped is a once-a-year event, the infrastructure is designed to be cost-efficient: spot instances are used for batch processing, and caching layers reduce repeated reads.
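
The canary logic described above can be sketched as stable hash-based bucketing plus a stage controller. The stage percentages, the salt string, and the 1% error budget below are illustrative assumptions, not documented Spotify values.

```python
import hashlib

# Assumed rollout schedule, as percentages of users.
ROLLOUT_STAGES = [1, 5, 25, 50, 100]


def rollout_bucket(user_id, salt="wrapped-2025"):
    """Map a user to a stable bucket in [0, 100) using a hash of the user ID."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def in_rollout(user_id, percent):
    """True if this user is included at the given rollout percentage."""
    return rollout_bucket(user_id) < percent


def next_stage(current_percent, error_rate, max_error_rate=0.01):
    """Advance to the next stage if healthy; roll back to 0 if errors exceed the budget."""
    if error_rate > max_error_rate:
        return 0
    later = [p for p in ROLLOUT_STAGES if p > current_percent]
    return later[0] if later else current_percent
```

Because the bucket depends only on the user ID and salt, a user who sees Wrapped at 5% still sees it at 25%, which keeps the experience consistent as the rollout widens.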

Tips for Creating Your Own Year-in-Review Feature

If you’re inspired to build something similar for your product, keep these insights in mind:

- Start early. The data pipeline for Wrapped takes months to prepare. Begin collecting granular event logs at least six months before launch.

- Focus on personalization. Generic stats are boring. Use machine learning to find truly unique moments for each user—like a forgotten favorite that made a comeback.

- Test for emotional resonance. Run small focus groups to see which stories make people smile. The NLG engine should sound human, not robotic.

- Optimize for shareability. The best Wrapped moments are the ones users want to post on social media. Design sharing buttons and image exports upfront.

- Plan for scale. Expect a massive surge in traffic. Use auto-scaling and aggressive caching. Have a rollback plan if performance degrades.

- Iterate based on feedback. After each Wrapped launch, analyze user feedback (comments, support tickets) to improve the next version. The 2025 insights are already shaping 2026.

By following these steps—and adapting them to your own data—you can create a year-end experience that delights users and showcases the power of your engineering team.
