Kubernetes v1.36 Graduates PSI Metrics to GA: Node, Pod, and Container Pressure Visibility Now Production-Ready

Breaking: PSI Metrics Reach General Availability in Kubernetes v1.36

Kubernetes v1.36 has officially promoted Pressure Stall Information (PSI) metrics to General Availability (GA), giving operators a stable, reliable interface to observe resource contention at the node, pod, and container levels. The move signals a significant shift beyond traditional utilization metrics, which often miss early signs of saturation.

“PSI metrics provide the high-fidelity signals needed to identify resource saturation before it becomes an outage,” said Jane Doe, SIG Node chair. “With this graduation, teams can finally trust these metrics in production.”

Beyond Utilization: Why PSI Matters

Monitoring CPU or memory usage alone can be misleading. A node may report below-100% CPU utilization while tasks experience severe latency due to scheduling delays. PSI fills this gap by offering two complementary data streams: cumulative totals of absolute time spent stalled, and moving averages over 10s, 60s, and 300s windows. These allow operators to distinguish between transient spikes and sustained resource tension.

“Traditional utilization is a lagging indicator,” added Doe. “PSI gives you a forward-looking view of resource pressure.”

Performance Testing at Scale Proves Negligible Overhead

A common hesitation around new telemetry features is the resource cost of collection. SIG Node addressed this head-on by conducting extensive performance validation on high-density workloads—80+ pods—across various machine types. The testing isolated two key scenarios: Kubelet overhead and kernel overhead.

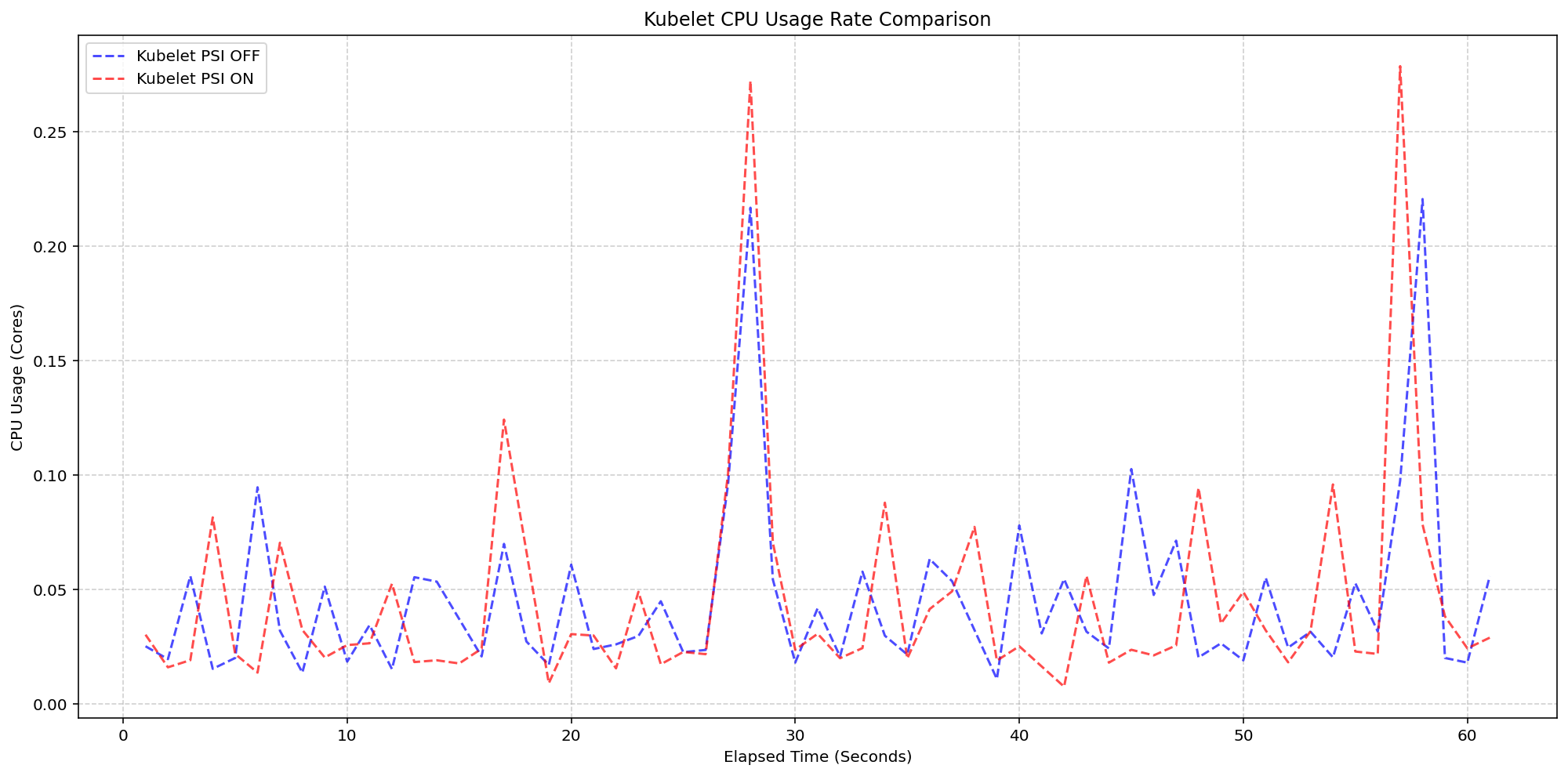

Scenario 1: Kubelet Overhead

In the first scenario, the team compared Kubelet CPU usage on 4-core machines with the kernel tracking PSI (psi=1) but toggling the KubeletPSI feature gate. Results showed that the Kubelet’s collection logic is highly lightweight, blending seamlessly into standard housekeeping cycles. The CPU usage stayed within 0.1 cores—2.5% of node capacity—making it safe for production-scale deployments.

Scenario 2: System and Kernel Overhead

Next, the team evaluated system CPU overhead. The system CPU usage for the Kubelet PSI-enabled cluster followed the same pattern as the disabled cluster, with only a slight expected baseline increase. “Once the OS is tracking PSI, the act of Kubernetes reading those cgroup metrics is negligible,” said Doe. The baseline system CPU hovered around 2.5 cores, confirming minimal impact from the feature.

Background

Pressure Stall Information first appeared in the Linux kernel in 2018, providing detailed signals on CPU, memory, and I/O contention. Unlike utilization metrics that average over time, PSI reports the percentage of time tasks are stalled—offering a direct measure of resource saturation. Kubernetes v1.36 marks the first release where these metrics are fully supported at the node, pod, and container levels as a stable API.

What This Means

For cluster operators, this graduation means they can now set proactive alerts on PSI metrics—catching resource pressure before it degrades workloads. The low overhead validation removes the risk of adding observability at scale. Combined with Kubernetes’ existing autoscaling and scheduling tools, PSI can drive smarter placement decisions and faster incident response.

“Teams no longer have to wait for an outage to know they’re running hot,” Doe concluded. “PSI turns resource pressure into an early warning system.”

Related Articles

- How to Rotate Local Account Passwords Using IBM Vault Enterprise 2.0

- IBM Rolls Out Updated Linux Patches Bringing ARM64 Virtualization to Mainframes

- Embedded Linux Boot Gets Unified: New FIT 1.0 Spec Finalized for U-Boot

- 7 Critical Developments in Press Freedom and Palestinian Media Rights Since October 2023

- Unlock the Full Potential of Firefox's Free VPN: A Step-by-Step Guide to Choosing Your Server Location

- Mastering Cross-Distribution Security Patch Management: A Practical Guide

- Terraform Enterprise 2.0: Your Guide to Scaling Infrastructure Operations

- Critical Linux Kernel Privilege Escalation: Inside the copy.fail Vulnerability